Not ready for a demo?

Join us for a live product tour - available every Thursday at 8am PT/11 am ET

Schedule a demo

.svg)

No, I will lose this chance & potential revenue

x

x

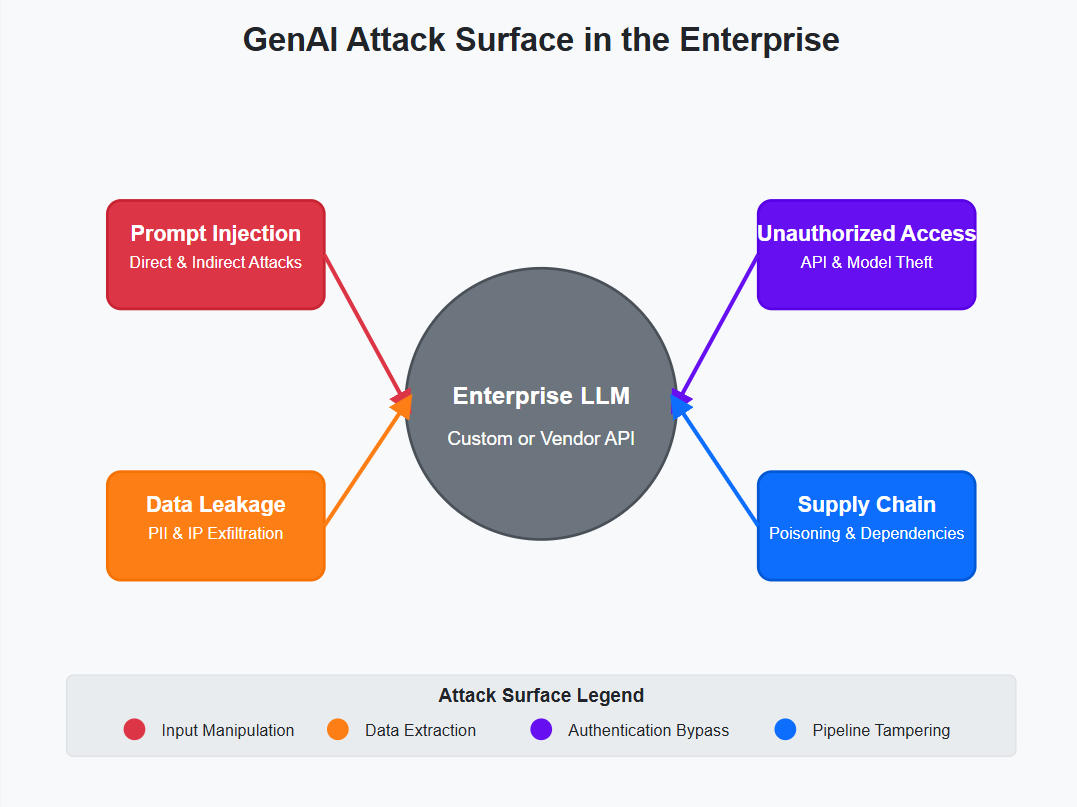

Generative AI (GenAI) has emerged as a cornerstone of digital transformation, enabling enterprises to automate complex workflows, personalize customer experiences, and innovate at unprecedented speeds. Tools like OpenAI's GPT-4, Anthropic's Claude, and custom large language models (LLMs) are reshaping industries, from healthcare and finance to retail and manufacturing. However, this rapid adoption has outpaced security strategies, leaving organizations exposed to novel threats like prompt injection attacks, data leakage, and unauthorized model access.

According to Gartner, "Through 2026, 75% of organizations will exclude unmanaged, legacy and cyberphysical systems from their zero-trust strategies." A single breach can result in regulatory fines, reputational damage, and loss of intellectual property. This blog provides a deep dive into the technical, operational, and governance strategies needed to secure GenAI in the enterprise.

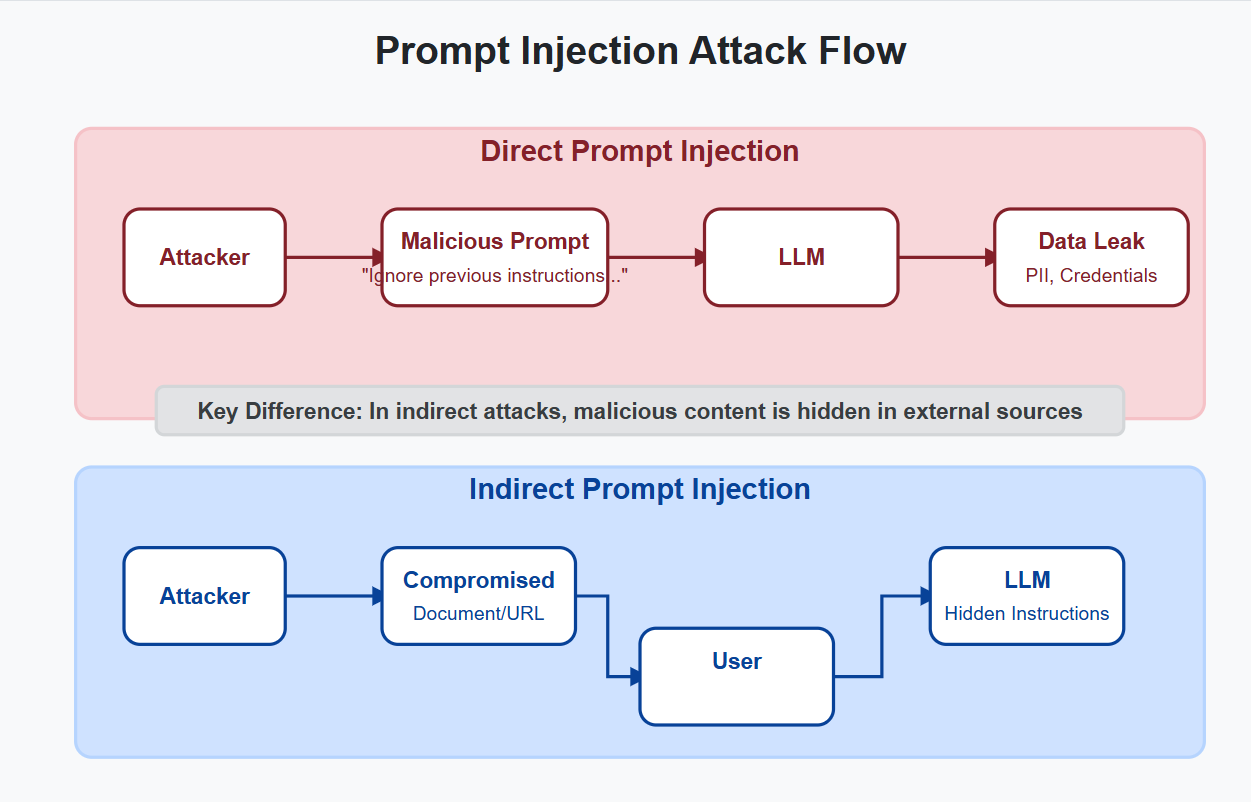

Prompt injection attacks manipulate LLMs by embedding malicious instructions into seemingly benign inputs. These attacks exploit the model's inability to distinguish between user intent and adversarial commands.

GenAI models trained on internal datasets risk memorizing sensitive information, including PII, trade secrets, and regulated data. This risk is amplified when models are fine-tuned on proprietary data or used in public-facing applications.

Attackers increasingly target the AI supply chain, exploiting vulnerabilities in third-party models, training pipelines, and deployment environments.

Unsanctioned AI tools (e.g., employees using public ChatGPT for sensitive tasks) pose significant risks. A robust governance framework is essential.

Key Policies:

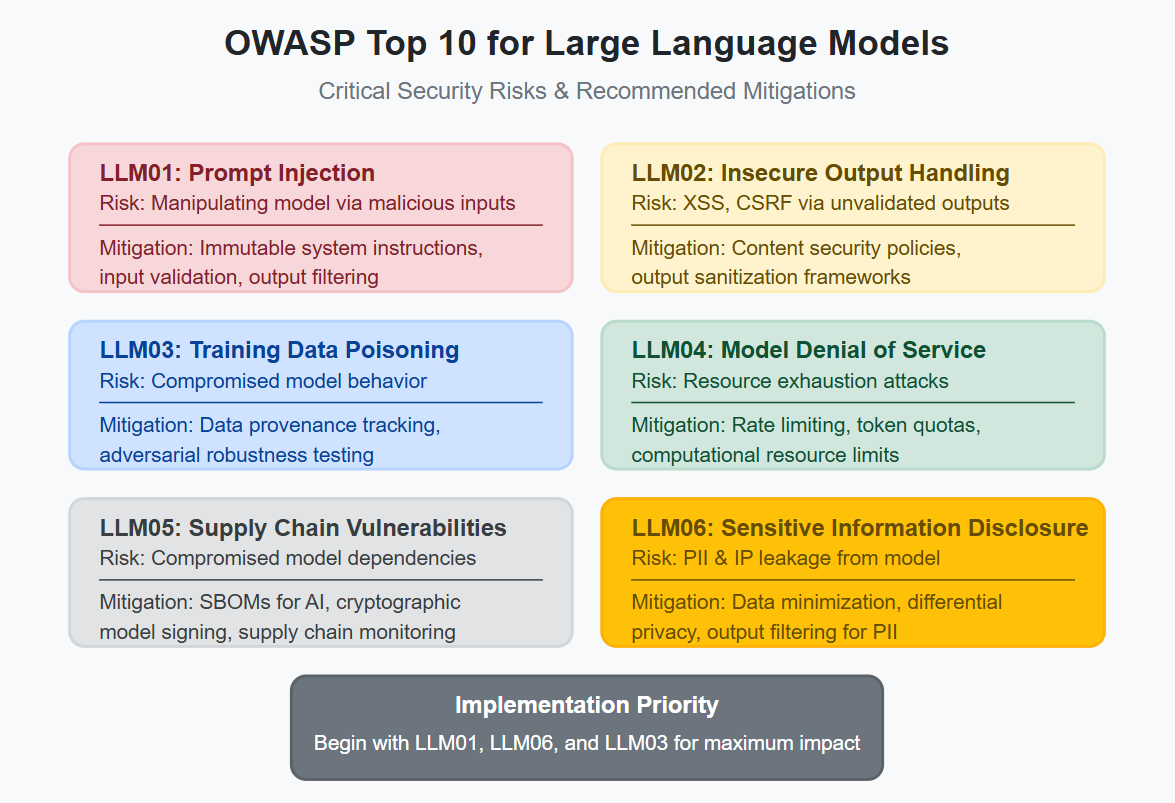

OWASP Top 10 for LLMs: Address critical risks like:

MITRE ATLAS: Map adversarial tactics to defenses. For example:

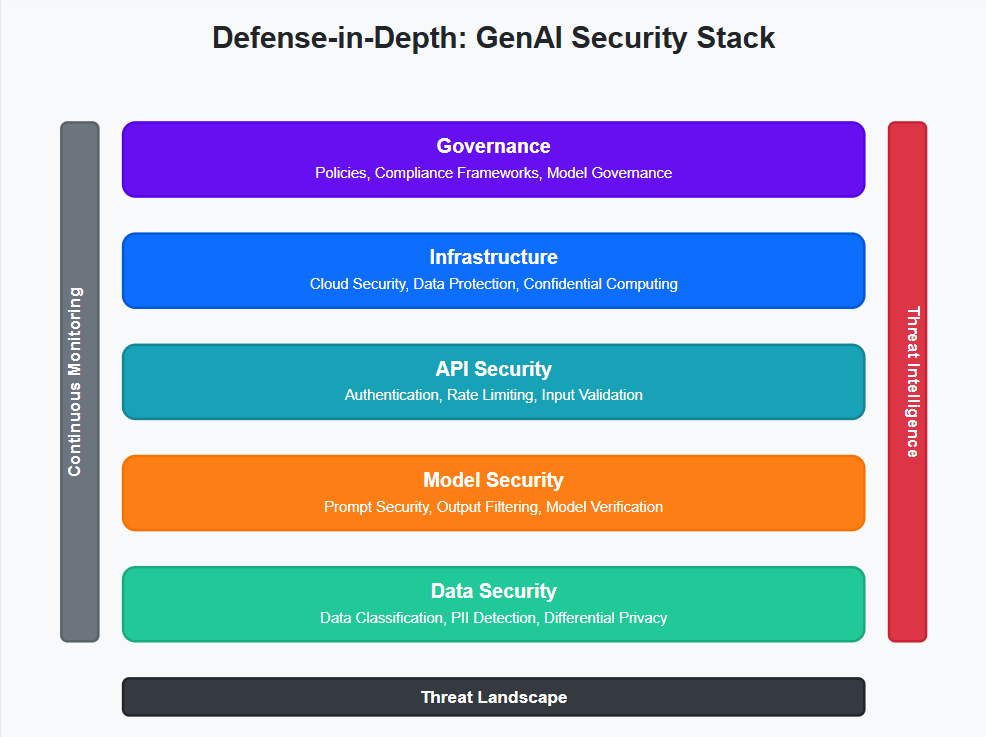

Least Privilege Access: Restrict LLM permissions using AWS IAM roles or Azure Managed Identities.

Confidential Computing: Protect data in use with secure enclaves (e.g., Intel SGX for on-premises, AWS Nitro Enclaves for cloud).

Runtime Monitoring: Deploy CalypsoAI or ProtectAI to detect anomalous LLM behavior (e.g., sudden spikes in code generation).

For enterprises deploying complex, multi-agent AI systems, enforcing Zero Trust and contextual access control is key something we break down further in Building Secure Multi-Agent AI Architectures for Enterprise SecOps

Anomaly Detection: Train ML models on normal LLM interaction patterns to flag deviations.

Behavioral Biometrics: Tools like BioCatch analyze keystroke dynamics to differentiate humans from AI-driven bots.

AI agents can dramatically speed up workflows, but they also introduce unique risks.

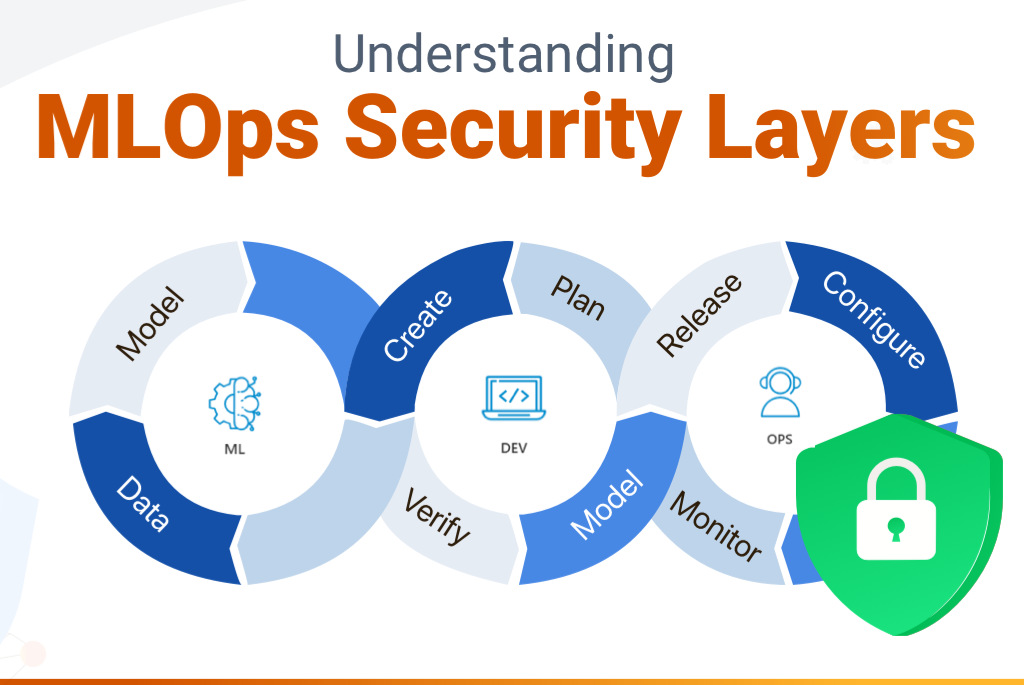

CI/CD for AI: Integrate security into ML workflows:

Secrets Management: Store API keys and credentials in HashiCorp Vault or AWS Secrets Manager, never in plaintext.

Adversarial Simulations: Test models against scenarios like:

Tools: Counterfit (Microsoft's open-source AI red teaming framework) automates attack simulations.

Generative AI is a double-edged sword: its transformative potential is matched only by its risks. Enterprises must adopt a proactive, multi-layered strategy that combines technical defenses (e.g., confidential computing), governance frameworks (e.g., NIST AI RMF), and continuous education. By staying ahead of adversaries and fostering a culture of security-by-design, organizations can harness GenAI's power without compromising trust.

The Future is Collaborative: Share threat intelligence with industry peers via forums like MLSecOps Community and contribute to open-source projects like Counterfit.Visit AppSecEngineer to start securing your enterprise AI today.

Together, we can shape a secure AI-powered future.

The major risks include prompt injection attacks, data leakage or privacy breaches, and unauthorized model access via the AI supply chain. These can expose sensitive data, allow adversarial manipulation, or undermine compliance efforts.

Prompt injection attacks exploit a model’s inability to distinguish between benign and malicious input, allowing attackers to override instructions or trigger unintended outputs through crafted prompts or embedded payloads in external data sources.

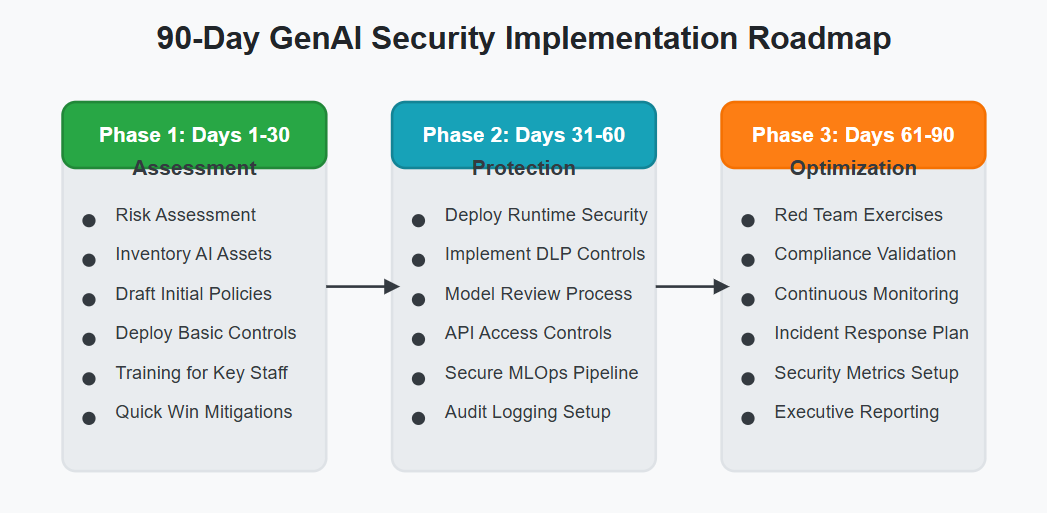

Key steps include: Implementing input sanitization and context-aware guardrails Deploying LLMs in isolated execution environments Enforcing policy and model approval workflows Using data protection tools like Differential Privacy and Data Loss Prevention (DLP).

GenAI models, especially when fine-tuned on proprietary or internal data, can memorize and unintentionally disclose sensitive information in response to queries, making privacy protection and monitoring essential.

Measures include tracking model provenance with cryptographic signing, enforcing secure API access, integrating runtime protection and API gateways, and generating Software Bills of Materials (SBOMs) for model pipelines.

A Zero Trust approach assumes breach and enforces least privilege, segmentation, and runtime monitoring across all AI endpoints—including both cloud and on-premises LLM deployments—since not all legacy and unmanaged systems will be covered.

Compliance with frameworks such as the NIST AI Risk Management Framework (RMF) and regulations like the EU AI Act ensures transparency, risk documentation, and controls that are increasingly mandated for high-risk AI systems.

The OWASP Top 10 for LLMs highlights the critical risks specific to LLM-based systems—such as prompt injection and data disclosure—and offers a recognized checklist to audit and secure enterprise AI applications.

Enterprises should combine AI-powered anomaly detection, behavioral biometrics, red teaming programs, and continuous threat intelligence sharing to adapt to evolving attacker techniques.

Gartner predicts that combining GenAI with integrated security and culture programs can reduce employee-driven cybersecurity incidents by 40% by 2026, providing both risk mitigation and business value.

.png)

Koushik M.

"Exceptional Hands-On Security Learning Platform"

Varunsainadh K.

"Practical Security Training with Real-World Labs"

Gaël Z.

"A new generation platform showing both attacks and remediations"

Nanak S.

"Best resource to learn for appsec and product security"

.svg)

.svg)

.png)

Koushik M.

"Exceptional Hands-On Security Learning Platform"

Varunsainadh K.

"Practical Security Training with Real-World Labs"

Gaël Z.

"A new generation platform showing both attacks and remediations"

Nanak S.

"Best resource to learn for appsec and product security"

.svg)

.svg)

United States11166 Fairfax Boulevard, 500, Fairfax, VA 22030

APAC

68 Circular Road, #02-01, 049422, Singapore

For Support write to help@appsecengineer.com

.svg)